Why do edtech folk react badly to scepticism? Part 2: Confirmation bias

Feb 23, 2016

In Part 1 I explored the concept of vested interest and how it could lead us to make decisions and react in ways which might, to others, appear irrational. This post addresses another predictable way we make mistakes: the confirmation bias.

Confirmation bias, the tendency to overvalue data which supports a pre-existing belief, is something to which we all routinely fall victim. We see the world as we want it to be, not how it really is. Contrary to some of the accusations levelled at me, I don't hate technology. Far from it. I'm just sceptical about unbridled enthusiasm. Technology might help in certain circumstances and in others it might not. Because of the costs involved I'm cautious about recommending technological solutions, but two examples of tech in education which I think are worth a punt are Colin Hegarty's maths site and Chris Wheadon's comparative judgement assessment system.

But if you're already a true believer in the benefits of edtech - or anything else for that matter - you will tend not to be very critical of evidence which says edtech is great and completely scornful of any suggestion that it's not all that. All too often, technology enthusiasts get excited by the product and then start thinking about how to use it in the classroom. The most common, and most worrying, effect of confirmation bias is when enthusiastic teachers draw the conclusion, "Well, it works for me and my students!"

Does it? How do you actually know? Often we're guilty of taking feedback from dubious sources. We notice that it feels good. We notice that students seem to enjoy lessons more and we conclude, erroneously, that this means what we're doing is working. In order to know whether an intervention was working we'd have to design a fair test with a control group and find a way to reliably measure the progress of both groups to see which one actually made more progress as opposed to which one appeared to make more progress.

This isn't a hypothetical problem. Much of what scientists have discovered about how we really learn as opposed to how we think we learn is counter-intuitive. In the foreword to What If Everything You Knew About Education Was Wrong? Robert Bjork wrote:

That we tend to have a faulty mental model of how we learn and remember has been a source of continuing fascination to me. Why are we misled? I have speculated that one factor is that the functional architecture of how we learn, remember, and forget is unlike the corresponding processes in man-made devices. We tend not, of course, to understand the engineering details of how information is stored, added, lost, or over-written in man-made devices, such as video recorder or the memory in a computer, but the functional architecture of such devices is simpler and easier to understand than is the complex architecture of human learning and memory. If we do think of ourselves as working like such devices, we become susceptible to thinking, explicitly or implicitly, that exposing ourselves to information and procedures will lead to their being stored in our memories—that they will write themselves on our brains, so to speak—which could not be further from the truth.

He goes on:

What we can observe and measure during instruction is performance; whereas learning, as reflected by the long-term retention and transfer of skills and knowledge, must be inferred, and, importantly, current performance can be a highly unreliable guide to whether learning has happened. In short, we are at risk of being fooled by current performance, which can lead us, as teachers or instructors, to choose less-effective conditions of learning over more-effective conditions, and can lead us, as learners ourselves, to prefer poorer conditions of instruction over better conditions of instruction.

It might well appear that our interventions ‘work’ but what effect are they actually having? If we’re content to merely raise pupils’ current performance then we’ll see plenty of evidence to support our beliefs. And when we don’t see the evidence we expect, we’re happy to ignore these occasions as an ‘off day’ or worse, evidence that another teacher isn’t up to snuff if they can’t teach using the latest gimmickry. When a teacher’s practice is held up as a model of ‘best practice’ we tend to ‘cherry-pick’ those bits we’re comfortable with, or already know something about, and to ignore anything unfamiliar, difficult or strange.

The disconnect between what we can observe in our lessons and what occurs inside students' minds is why we need well designed research. There's been a fair bit of research into the effects of technology on learning and as far as I can see, the jury's still out. The Education Endowment Foundation suggest digital technology provides moderate gains for moderate cost. That looks promising, so let's see what it means in practice.

Evidence suggests that technology should be used to supplement other teaching, rather than replace more traditional approaches. It is unlikely that particular technologies bring about changes in learning directly, but different technology has the potential to enable changes in teaching and learning interactions, such as by providing more effective feedback for example, or enabling more helpful representations to be used or simply by motivating students to practise more.

OK, so maybe if technology "has the potential to enable changes in teaching and learning interactions" we should dive right in? Well first, let's consider the costs:

The costs of investing in new technologies are high, but they are already part of the society we live in and most schools are already equipped with computers and interactive whiteboards. The evidence suggests that schools rarely take into account or budget for the additional training and support costs which are likely to make the difference to how well the technology is used. Expenditure is estimated at £300 per pupil for equipment and technical support and a further £500 per class (£20 per pupil) for professional development and support. Costs are therefore estimated as moderate.

This estimate of £300 per pupil aggregates all the different things which might be meant by digital technology: clearly the more extravagant the kit, the greater the expense. Are there any other costs? Well, there's also the cost in teachers' time. The EEF allude to the problems here when they say, "schools rarely take into account or budget for the additional training and support costs which are likely to make the difference to how well the technology is used." We should consider whether buying in some new kit and investing in the effort to train everyone in how to use it would be a better use of resources than spending that time and money on something else. This is the principle of opportunity cost. What is the likely impact of the best foregone choice and how does that compare against the costs of implementing the choice you actually make?

There are also some other considerations. Technology is not an end in itself - what is it you think edtech can do? New technology does not automatically lead to increased attainment, so are you clear on how and why your investment will improve learning? If technology is not supporting students to work harder, for longer or more efficiently to improve their learning, what is it doing? Sure, it's motivating to have something new and shiny to play with, but this is not going to automatically translate into better outcomes. In this report on The Impact of Digital Technology on Learning the authors conclude that "it is clear technology alone does not make a difference to learning."

These are all complex issues and it's really not good enough to take the position that edtech is de facto good. Before we implement any new system or strategy, especially one which will affect every teacher and student in a school (such as a 1-1 device policy) we should ask these five questions:

- What evidence is there to suggest the intervention will work as expected?

- What problem is being solved and what is supposed to improve?

- How will we know if things are getting better?

- When is this improvement expected?

- What will happen if the goal is, or isn’t, met?

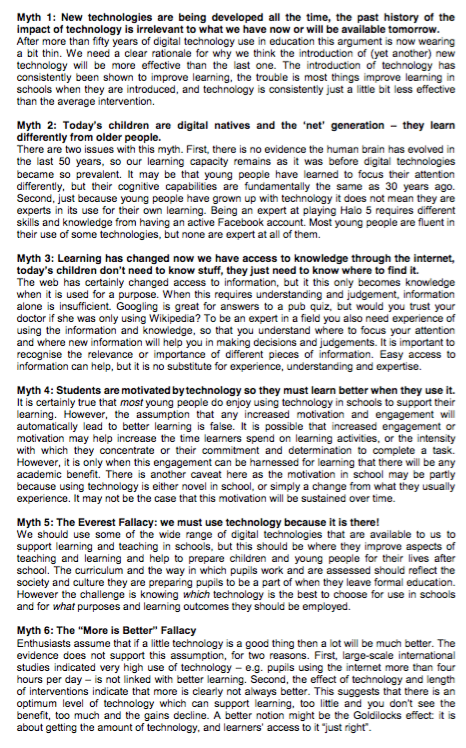

Postscript from The Impact of Digital Technology on Learning
